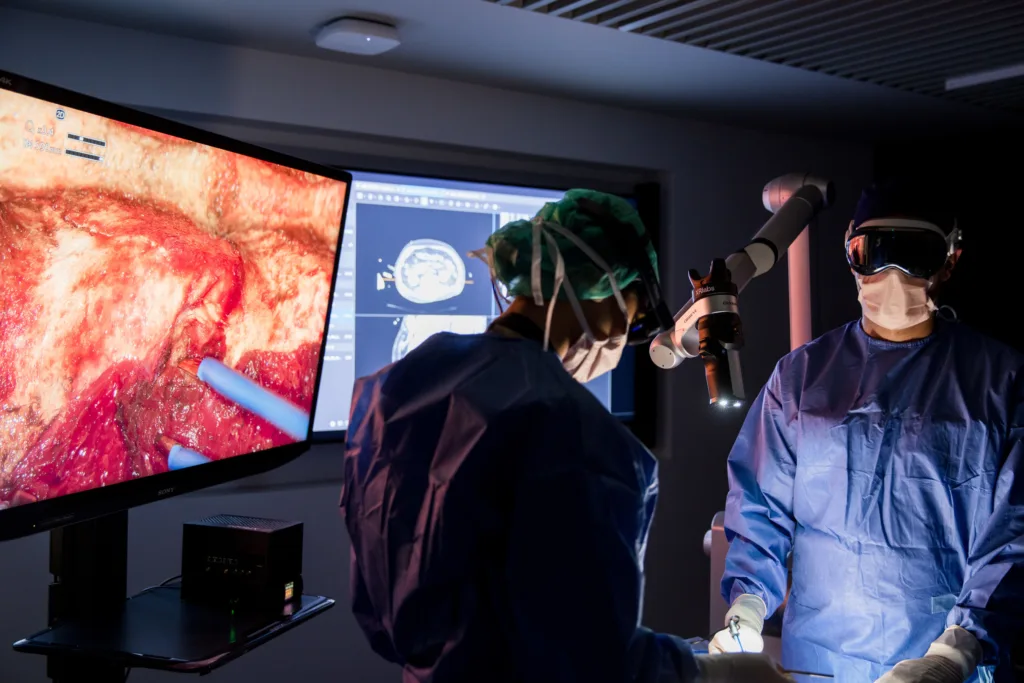

XRlabs has disclosed it is integrating NVIDIA Jetson Thor and NVIDIA Isaac for Healthcare to develop an AI-powered retrofit system for surgical visualisation tools, aiming to deliver real-time perception, tool tracking, and intent-aware automation for neurosurgery and other minimally invasive procedures. The system is currently under early-stage clinical evaluation.

Unlike traditional surgical robotics or integrated imaging suites that require expensive overhauls of the operating room (OR), the system being developed by XRlabs is positioned as a modular, device-agnostic “surgical intelligence retrofit.” It is designed to bolt onto existing surgical exoscopes, microscopes, or other visualisation systems—essentially turning legacy devices into edge AI-enabled smart tools without requiring hardware replacement or workflow overhauls. At the heart of this initiative is XRlabs’ SurgicalOS software stack, now fused with NVIDIA’s low-latency embedded hardware and sim-to-real robotic development ecosystem.

This signals a broader rethinking of how intraoperative AI should be implemented—not through billion-dollar robotic platforms but via edge compute and software layers that preserve surgeon preference and hospital infrastructure constraints.

What this retrofit approach reveals about AI adoption in surgical intelligence platforms

The move by XRlabs to use NVIDIA Jetson Thor and NVIDIA Isaac for Healthcare is more than a hardware or software choice; it is a signal of where surgical AI is going. Most hospitals are still operating with a mix of aging equipment and fragmented digital platforms. In that context, any AI system that mandates full-stack replacement will face long procurement cycles and resistance to capital spending. XRlabs’ approach flips this logic: start with what hospitals already have, and make it smarter.

The use of Jetson Thor, NVIDIA’s latest embedded AI system-on-module, allows high-performance, low-latency video perception at the edge, which is critical in high-risk, time-sensitive procedures such as neurosurgery. Meanwhile, NVIDIA Isaac for Healthcare provides a simulation-to-reality development framework that helps XRlabs build, test, and refine its AI models before deployment. That addresses one of the largest bottlenecks in surgical robotics, where moving from lab to live OR remains fraught with safety, regulatory, and fidelity challenges.

Industry analysts note that few medtech startups have effectively tackled the retrofit angle in surgical AI. Most have chosen to build full-stack platforms—an approach that garners investor attention but often struggles with real-world implementation. XRlabs is betting that simulation-trained, plug-in intelligence will be easier to validate, scale, and clinically integrate than robot-first solutions.

What this changes for intraoperative decision support and surgeon–AI co-pilot models

If XRlabs’ platform succeeds in its core promise—tool awareness, automated framing, and real-time decision augmentation—it could reshape how surgical support systems are defined. Today’s AI-in-surgery landscape is fragmented. Companies like Caresyntax, Activ Surgical, and Theator have focused on data analytics, video indexing, and procedural benchmarking post-surgery or during simulation. Others, like Intuitive Surgical or Medtronic, embed AI deeper into their robotic systems.

XRlabs aims to fill a different gap: active intelligence during the surgery, delivered via retrofitted scopes with low-latency edge compute. By transforming the live video feed into an interface that tracks instruments and responds contextually—while still fitting into OR workflows—it is attempting to function as a cognitive co-pilot that does not require the surgeon to break stride.
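To make the idea of automated framing concrete, here is a minimal Python sketch of one generic way a view could follow a tracked instrument without jitter. It is not XRlabs’ method or any part of SurgicalOS, whose internals have not been disclosed. The names `FramingState`, `update_framing`, and `crop_box`, the smoothing factor `alpha`, and the idea of a tracker that returns a tool-tip pixel position are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class FramingState:
    """Smoothed centre of the region of interest, in pixels."""
    cx: float
    cy: float


def update_framing(state, tip_xy, alpha=0.2):
    """Move the framing centre a fraction `alpha` of the way toward the tool tip.

    `tip_xy` is the (x, y) tool-tip position from a hypothetical tracker,
    or None when no tool is detected in this frame.
    """
    if tip_xy is None:
        # Hold the last framing rather than jumping when tracking drops out.
        return state
    x, y = tip_xy
    return FramingState(
        cx=state.cx + alpha * (x - state.cx),
        cy=state.cy + alpha * (y - state.cy),
    )


def crop_box(state, frame_w, frame_h, crop_w, crop_h):
    """Return (left, top, right, bottom) centred on the state, clamped to the frame."""
    left = min(max(state.cx - crop_w / 2, 0), frame_w - crop_w)
    top = min(max(state.cy - crop_h / 2, 0), frame_h - crop_h)
    return (left, top, left + crop_w, top + crop_h)
```

A lower `alpha` gives a steadier but slower-following view; holding the last framing when the tool is lost is one way to avoid sudden jumps that could distract the surgeon.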

Clinicians tracking the field note that for AI co-pilots to gain traction, they must offer utility without distraction. That XRlabs is starting its early-stage evaluation in neurosurgery, one of the most precision-driven fields, suggests the company is aiming to prove its usefulness in the most unforgiving clinical context first.

What could delay adoption: validation, standardisation, and integration inertia

Despite the promise of a modular retrofit model, several implementation risks remain. Regulatory clearance for real-time surgical decision support tools is non-trivial—particularly when automation features are involved. Even intent-aware framing, if it actively influences what the surgeon sees, could be interpreted as altering the surgical experience and may require high evidence thresholds for approval.

There is also the challenge of integration fatigue. Hospital IT departments, device procurement teams, and OR staff are already overloaded with digital tools and systems that promise seamless integration but often result in complexity creep. For SurgicalOS to scale, XRlabs will need to show that it can reliably plug into diverse surgical visualisation devices and electronic health records (EHRs), while keeping latency, data security, and uptime within strict tolerances.
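One simple way to reason about a latency tolerance is to compare the summed per-frame processing time against the frame period of the video feed. The Python sketch below illustrates that arithmetic only; the stage names and millisecond figures are hypothetical placeholders, not measurements from SurgicalOS or Jetson Thor.

```python
def frame_period_ms(fps):
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def within_budget(stage_ms, budget_ms):
    """Sum per-stage latencies and report whether they fit the budget."""
    total = sum(stage_ms.values())
    return total, total <= budget_ms


# Hypothetical per-frame stage timings in milliseconds (illustrative only).
stages = {"capture": 4.0, "inference": 9.0, "overlay": 2.0}

total, ok = within_budget(stages, frame_period_ms(60))
print(f"total {total:.1f} ms of {frame_period_ms(60):.2f} ms budget, ok={ok}")
```

At 60 frames per second the budget is roughly 16.7 ms per frame, so any stage that grows by a few milliseconds during a long procedure can push the pipeline over the limit.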

Finally, while Jetson Thor offers impressive on-device compute power, hardware supply constraints or overheating concerns in the OR environment may surface during wider deployment. The real-world reliability of the edge AI stack—especially during lengthy or complex surgeries—will be watched closely.

What this implies for NVIDIA’s broader healthcare strategy in surgical robotics and edge AI

XRlabs’ use of NVIDIA’s Jetson Thor and Isaac for Healthcare may be small in scale, but it fits squarely into NVIDIA’s growing healthcare robotics thesis. Holoscan, NVIDIA’s real-time sensor processing platform for medical devices, is already being adopted by companies working on next-generation endoscopy, ultrasound, and interventional imaging. Together, NVIDIA’s Isaac SDK and Jetson edge platforms now offer an increasingly complete stack for surgical intelligence startups seeking simulation-to-deployment pipelines.

Analysts tracking NVIDIA’s healthcare push suggest that the company is quietly assembling the GPU–simulation–robotics trifecta for surgical startups. By lowering the barrier to building safe, perceptive, and automated surgical systems, without forcing those companies to reinvent the hardware stack, NVIDIA can entrench itself in the operating room just as it did in autonomous vehicles and data centres.

In this light, XRlabs may be one of the early test cases for whether surgical AI can succeed by being smaller, more modular, and simulation-first.

What industry stakeholders will watch next as the platform moves toward clinical maturity

The biggest watchpoint for XRlabs will be real-world clinical validation. It remains unclear whether its early evaluations are being conducted under Investigational Device Exemption (IDE) protocols or as observational usability studies. Either way, results from neurosurgical integration will be closely monitored by both regulators and potential OEM partners.

Adoption will hinge not only on efficacy, but also on surgeon trust, ease of training, and the ability to demonstrate procedural value—such as reduced error rates, shorter operating times, or improved visualisation in complex cases.

Hospital groups and device manufacturers may also be evaluating the licensing potential of SurgicalOS as an embeddable platform—especially those looking to extend the life of their existing visualisation tools without investing in full-stack robotics.

If XRlabs can prove its retrofit model delivers AI benefits without disrupting OR flow, it may open the door to a new class of surgical co-pilots that do not require billion-dollar buildouts.